Osmos, Updates, and Floating-Point Determinism

We just released Osmos 2.4.0 on iOS – our first release in almost 4 years. Why the long hiatus? Was it because 2.3.1, our previous release, was perfect? No, though it was solid and stable, and we were happy with it. Rather, it was due to our reliance on “floating-point determinism” for multiplayer. And for years afterwards I thought it might be the last version we ever released on iOS.

Desynchronization: A Little Osmos Multiplayer History

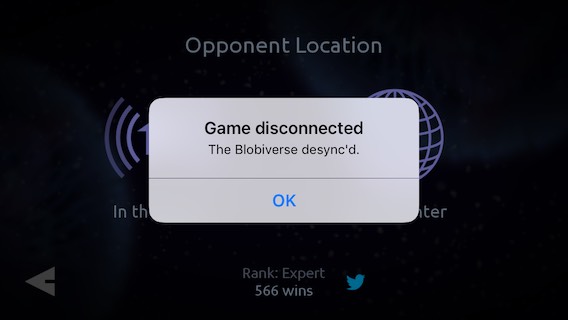

It’s 2013. Miley Cyrus is wrecking stuff in her underwear. I was about to submit version 2.3.1 to Apple for approval. This was a minor update from 2.3.0 – just a few fixes. We did a little testing, and all seemed solid. Then, just before submitting, we got a “blobiverse desync” error during a multiplayer test.

What, you may ask, is a “blobiverse desync”? Well at the time it was something I never expected – nor wanted – to see again.

It’s 2011. Everyone is getting into Minecraft. Aaron and I were working hard on creating a multiplayer mode for Osmos – a surprisingly ambitious and tricky project. One technical question we faced was how to synchronize game state between devices over a network. There are a lot of moving blobs in Osmos: It takes roughly 7 kb to represent the game’s state at any given moment. Multiply that by a decent framerate, and the network bandwidth required to stream Osmos state across a network gets into video streaming territory. We wanted to avoid putting that kind of load on people’s networks and devices. Instead, if we could simulate the game locally and identically on each device, transmitting only player input (mass-firing, mainly) across the network, the bandwidth requirements would be minimal. We decided to go that route.

We encountered and solved so many technical challenges in our development of Osmos multiplayer, many of which I found super interesting, but I want to focus on just one here: simulation determinism. It’s actually a huge subject, so I’ll just touch on a couple bits and zoom in on an even narrower subject: floating-point determinism.

For starters, a titch about simulating physics on computer. If you’re familiar with the term “lockstep simulation” – one of the things we implemented for Osmos multiplayer – feel free to skip the rest of this paragraph. Generally, physics is simulated a frame at a time: calculate, step; calculate, step; and so on. If you do it right, the smaller your time-step is (the time from frame to frame) the more precise your results will be. Precision aside, it’s important here to note that a different time-step will give you different results. For example, simulating one second in 60 steps (1/60th of a second per step) will give you a different result than simulating that same second in 30 steps (at 1/30th of a second per step). But so long as the framerate is decent and things are stable, these differences don’t get noticed by most players. In single-player Osmos we simply run as many frames as we can per second. If your device can handle it, this means the game will run at 60 fps, bound by the refresh rate of your display. But for various reasons some frames end up taking longer than others, sometimes due to the complexity of what’s happening in game, and sometimes due to what else the device might be doing in the background. So one frame may take 1/30th of a second, another 1/47th, another 1/60th, etc. And that’s fine. The problem arises when you want two different simulations to give identical results. In that case, the time-step used for calculations must be identical for both simulations. Physics programers call this a “lockstep” simulation, which we implemented for Osmos multiplayer. I won’t dive any deeper into this subject here, but a great resource on all this, including code examples, can be found on Glen Fiedler’s website.

You may ask: is this necessary? Won’t these calculation differences be minor? Who will notice? Well, Osmos falls into the category of sensitive systems that can produce drastically different results from slightly different initial conditions – aka the Butterfly Effect. For example: A tiny difference in mass-transfer between motes on different devices will cause them to exert slightly more or less gravity from that moment onwards, causing trajectories to vary more and more over time, until a near-miss on one device is a collision on the other – a “catastrophic” divergence. A player could die on one device but not the other. Not good.

Moving on, once you’ve implemented a lockstep simulation over a network, how do you know if it’s working correctly? Well, you could run a simulation on two devices and watch… and watch… and watch… until you maybe notice something looks different on the two displays. We did this for a little while, saw differences accumulate over time, and decided to get more rigorous. We computed a checksum / hash of the summed masses, x & y positions, and x & y velocities of all motes on the level (5 floats total), and started to send that across the network for every time-step. The devices would compare their local hash with the remote device’s hash, and if they differed we’d throw an alert up on the screen – blobiverse desync! – and dump a bunch of relevant numbers to a log file to analyze. One by one we found the divergences, and resolved them in various ways. And once we were done we were kind of amazed: devices from different generations, each running different versions of iOS – even the Xcode simulator – they all gave the same results! We had achieved simulation determinism. Huzzah!

I remember chatting with Jonathan Blow at GDC 2012 about what we were up to. We got into some technical details, and I mentioned we were using a lockstep simulation as our multiplayer solution. He made a face I can only describe as “Ugh” with a dash of “Really?” I remember saying “I know. I know. But it’s working well! We got this.”

We happily released Osmos multiplayer and never saw that error message again…

… until that final 2.3.1 build in 2013. What happened with that build? I had actually tested it a lot, and had never seen the desync. But one day right before release Dave and I were testing and – blammo – there it was. After some investigation we realized the desync only happened when playing 2.3.1 against the 2.3.0 store build. But what had changed? It turns out I had recently updated my version of Xcode. I tried reverting back to the previous version, and the problem went away. (I tested this to death.) Something in how the new Xcode was compiling our code gave different results from previous versions. Spooky. So, while Apple was still accepting builds from the older version of Xcode, we slipped it in. All went well with the release. But soon after, Apple stopped accepting builds from that version. We could no longer submit new versions of Osmos without breaking multiplayer. And for several years, we didn’t feel we needed to.

The 32-Bit App-ocalypse

Over the past half-year, Apple has gotten more aggressive about culling “unsupported” apps from the App Store, with 32-bit-only apps slowly approaching the chopping block. It’s pretty clear at this point that Apple will cut support for these with iOS 11. And so this January I rolled up my sleeves and started work on an update. I figured – worst case scenario – I might have to cut multiplayer entirely. I’m not actually sure what percentage of players play multiplayer. In any case I figure single-player Osmos is better than no Osmos.

Modernizing the Osmos project so it would build and run using the latest Xcode and frameworks took some work, but less than I expected. Ditto for 64-bit support. There were only a few glitches related to memory alignment in our rendering pipeline and network protocol. For example, here are a couple before-and-after videos I put together demonstrating the kind of alignment bugs that required squashing.

Pretty smooth work for the most part.

Desync issues aside, bigger changes were required for multiplayer. Apple’s networking and game frameworks have changed a lot over 4 years. And while I was at it I took a cue from Apple and removed all Game Center UI from the game, adding support for “background matchmaking” so players can practice/play single-player levels while waiting for a match. (There aren’t as many people playing Osmos multiplayer these days, so it can be a while before someone else comes along looking for a match.)

Hunting for Synchronicity

Of course, synchronization was the big question / risk in this update. I didn’t expect the new version of Osmos to be compatible with the 2.3.1 store build, and sure enough it wasn’t. So we’d lose backwards compatibility at least. But I wondered if this new version would be compatible with itself across different devices and OS versions, like it was in “the good old days” – specifically, 32 vs 64 bit devices. It wasn’t. Ugh. And so began the deep dive into floating-point determinism.

I tried many things. I spent a good chunk of time tinkering with compiler flags / settings. Turning off optimizations solved most of the desync issues, but I wanted to avoid that if possible. I tried flags like mfpmath and sse2, but they didn’t seem to get me anywhere, and documentation on the web with respect to those and clang is pretty thin. I revisited my understanding of floating-point math. I stared at waterfalls of numbers with many decimal places, trying to figure out where, why, and how things were diverging. I reduced the Osmos physics code to the point where nothing moved and no collisions occurred – at least that stayed in sync! I isolated the problem to the point where I had a single line of code that gave different results on 32 vs 64 bit devices for some (but not all!) input values. Simplified, it looked something like this:

mote.x += mote.vx * dt;

Simply update a mote’s x-position by its x-velocity times the time-step. For example, with

mote.x = 0.00668302644 mote.vx = 2.32162547 dt = 33/1000.0 // time-step in milliseconds

The mote’s new x-position on the iPhone 6 would be 0.0832966641 whereas it would be 0.0832966715 on the iPod 5. (A small difference, but still important.)

Were IEEE standards being ignored? No. This difference only occurred in Final Release builds, with optimizations enabled. Eventually I convinced myself it was due to compiler optimizations causing some intermediate results to be temporarily stored in double / 64-bit registers on 64-bit devices, leading the final float / 32-bit result to be somewhat different. So I tried “unrolling” some simple calculations. For example, I expanded the single line above to

float dx = mote.vx * dt; mote.x += dx;

This kind of change helped in some sections of code, but not everywhere. In some places the compiler was still optimizing / merging instructions. So, how to tell the compiler not to daisy-chain floating-point calculations? Well, as someone who is absolutely not a compiler expert, I came across a neat trick: the somewhat esoteric volatile keyword. Rewriting the above code as

volatile float dx = mote.vx * dt; mote.x += dx;

tells the compiler to rewrite the result (as a float) to the dx variable as soon as it’s calculated, and not to use any intermediate / higher-level registers. It’s a nice, code-local solution to the problem that can be applied in a very precise way where needed. I ended up having to do this to about 30 different blocks of code here and there in Osmos. It lengthens those sections of code (in some places from 10 lines to 40 lines of code), giving it more of an assembly-language style, but it works.

Unfortunately that wasn’t the one, magic bullet that solved everything. It took me a while to track down the last couple sources of divergence, and they turned out to be the sqrt() and some trignometry functions. (Osmos is all about circles after all.) When compiler optimizations are enabled, these both give slightly different results for some inputs. For example, acos(0.830012262) returns 0.591666639 on my iPhone 6 and 0.591666698 on my iPod 5. Volatile doesn’t help with this, so I tried rounding the results to the nearest degree, throwing away a bunch of precision, but giving indistinguishably-different results – totally fine so long as results match across devices. That worked. 99.999% of the time. Turns out every once in a while – hours of play on average – the results would end up on different integer boundaries after rounding. Ouch. Rounding can be a more complex operation than you might think, but it’s a solvable problem when there’s a ground truth you’re looking for, like the nearest integer to a given value. But when inputs are different, neither device in isolation has enough information to always come to the same result as the other. I lost days to that one, with much fun staring at streams and streams of tiny decimals. The solution? I went back to some basic circular geometry and came up with some new equations that would give a decent approximation of mote-mote area-overlap without the use of any trigonometry functions. The new approximation always underestimates the overlap, but that’s ok since the mass transfer generally gets spread across multiple time-steps anyways. I didn’t notice the difference, and I doubt anyone else would either.

With that, and after tons of testing, I think Osmos 2.4.0 is solid on this front. All seems good after a few days in “the wild” as well. Can I guarantee there aren’t any super-rare divergences remaining? Nope. Hopefully people will let me know if they ever see it occur.

TL;DR

Overall I spent nearly 4 months working on this update. Most of that was on multiplayer, with one month of that spent in the rabbit hole of floating-point determinism. I hope this blog post helps others avoid some of that pain.

To summarize: Lockstep synchronicity got you down?

- Try unrolling your calculations, assembly-style, and using the volatile keyword.

- Watch out for trig and other math functions. Sometimes they give the same results; sometimes they don’t.

- Don’t try rounding to solve your problem. It’s futile and just makes the problem rarer and harder to track down.

- Make sure you test with optimizations on.

Moving forward I’m curious if a future version of Xcode will again break our synchronization, or if we’re now more-or-less future proof. Time will tell.

ps. I could go on a lot longer on this and many other subjects related to Osmos multiplayer. If you find this blog post useful and/or interesting, please let me know. It’ll motivate me to blog more than once per year! ;-)

9 Comments

Tikitu

May 9, 2017Hey, I’d love to hear more about the development process! I can’t honestly say it will be useful (I’m an iOS dev but not in games and not likely to do anything real-time-ish or graphics-heavy in the near future) but it’s definitely interesting!

Ed Powley

May 10, 2017Interesting article! Just wondering if you ever considered switching to fixed-point calculations in the simulation? Integer math is deterministic so it would sidestep the issue.

Dave Knott

May 10, 2017Interesting post, Eddy!

A few comments…

The “daisy-chaining” problems that you describe may not be solely due to the compiler’s choice of registers. When the compiler sees code like this: “mote.x += mote.vx * dt;”, it may decide to fuse the multiply and add into a single instruction (assuming that your CPU architecture has such an instruction). The nasty thing about fused mutiply-and-add instructions is that, because they are not part of the the IEEE floating-point standard, they are not required to produce identical results across different CPUs. You can usually tell the compiler to avoid using fused multiply-and-add by turning off “fastmath” (which I assume you did ;-)

Also, I wonder if instead of using “volatile”, you could insert a memory barrier between the multiply and add in the expanded code, thus forcing the compiler to use specific registers in order to enforce synchronization. Just a thought…

Finally, I assume that floating point determinism is also the reason why you’re not using SSE or Neon ?

We’ve found that SIMD instructions can sometimes play fast-and-loose with the IEEE floating point standard, which causes a lot of the same kind of problems that you describe. That’s why we maintain two versions of our linear algebra libraries. One that is SSE optimized, and the other not. Multiplayer code that requires deterministic results (e.g. NPC pathfinding) is always written with the non-SSE-optimized version.

Phil

May 10, 2017Insightful writeup! Thanks!

What’s the “ugh” justification for using lock-step? Is there some alternative (short of remote sim with regular full-state updates) that would have avoided this (or other) problem(s) had you not been using lock-step?

eddybox

May 11, 2017Thanks for the thoughts and feedback, guys!

@Ed: We thought about it, but

1. We were adding to an existing codebase where everything was already floating point. It would have required a lot of changes back in the day to make the switch, and these floating-point issues (different results on different devices) didn’t occur back then once we’d set things up correctly.

2. Osmos has an extreme range of scales; some things can be teeny-tiny, and some can be huge. That includes very slow, drifty velocities, tiny & huge masses, and even time increments due to the ability to warp its flow smoothly. (Though we do disallow time-warping in multiplayer.) That’s a big range to cover with fixed point! Hard to say for sure without trying it of course, but I suspected it wouldn’t feel very good.

@Dave: Good point about the multiply-and-add. I had heard such things were possible (I vaguely & inaccurately alluded to that in my “higher-level registers” mention), though I don’t actually know if that’s what was happening here. As for fastmath, we had optimizations set to -Os as opposed to -Ofast (which enables fast math under the hood) and we had “Relax IEEE compliance” set to No. I’m not sure what else Xcode/clang might do under the hood though.

Memory Barrier: I had never head of that before, thanks! I just read a bit about them, and yeah, I think that would have worked as well.

And yes, I avoided SSE/Neon. Osmos physics is 2D so it didn’t seem worth it. Good to know about it being fast and loose with IEEE, thanks. That said, as I was writing this I came across the RSQRTSS / vrsqrts_f32 function, which may be a workaround for sqrtf() differences…? Not sure.

@Phil: Haha, you’d have to ask Jon to be sure, as I don’t want to put words in his mouth. That said, he didn’t actually suggest doing it differently. Maybe internally he agreed this was the way to go. (And yeah, what else could we do given my fixed-point thoughts earlier in this comment?) I imagine he just understood how delicate / precarious a solution like this could be, and didn’t envy the task. ;-)

Dave Knott

May 11, 2017I think that NEON and SSE have gotten a lot better about respecting the IEEE standard in recent years, so maybe it is less of an issue these days?

With regards to transcendental SIMD functions like vrsqrts_f32, they are typically not very accurate. Usually it is recommended to start with that function, followed by one or two iterations of Newton-Raphson.

You will usually see a performance benefit by using SIMD, even for 2D simulations.

The main drawback is that your data will need to be restructured to take advantage, and then you need to refactor your simulation math to use the new data structures. When moving to SIMD parallelization, the big up-front decision that needs to be made is whether to arrange your data as structure-of-arrays (SOA) vs array-of-structures (AOS).

Think about it for Osmos 2 ;-)

By the way, you are right about floating point rounding being a pain in the ass. I spent a *lot* of time debugging problems in our math library unit tests that could ultimately be traced back to subtle issues related to rounding.

Daniel Weiner

May 19, 2017This was really interesting! Please do more posts like this.

David Cantrell

May 24, 2017As an alternative to requiring simulation to work perfectly for ever, did you consider a scheme where you’d send player input across the network most of the time, with a full 7K dump of the universe every so often? A bit like how MPEG video has occasional “key frames” where the entire image gets transmitted with just inter-frame diffs the rest of the time. That way you would stand a good chance of recovering from simulation errors without anyone noticing.

eddybox

May 26, 2017Very interesting suggestion, David, thanks! In practice that’d be hard to implement, since by the time a device got the 7k dump from the remote device, the simulation would have moved on (between 3-15 time-steps, depending on the current ping). So we’d have to compare the dump to a historical snapshot, and if they’re different, we’d have to rewind and re-simulate forward from that point to the present. If all goes well / if the differences are very minor, the player wouldn’t notice a thing, which would be great, but the physics simulation in Osmos is expensive (a lot of moving bodies, forces, and collisions per frame) so it might take a while for the catchup calculations; if there are were frequent micro-divergences, we’d have to do that often, which might seriously hurt framerate. We could try to amortize all those calculations across frames, but yeah… all that to say, it’d be far from trivial to implement a solution like that for Osmos, and could lead down its own rabbit-hole. A cool suggestion though – and if it wasn’t for the lag between the dump and the present (or if rewinding wasn’t so costly) that’d be a great and relatively easy way to recover!